Every company's security stack trusts Microsoft, Amazon, Google, and GitHub because, well, it has to. Blocking *.servicebus.windows.net breaks Azure Event Hubs. Blocking api.github.com breaks your CI pipeline. Blocking *.s3.amazonaws.com breaks half the applications in your environment. Attackers know this, and they've built an entire playbook around abusing that implicit trust.

This post walks through three techniques that exploit that same trust problem. They work differently and sit on a spectrum: each one strips away one more thing defenders can grab onto. We've covered pieces of this in previous posts on layered C2 infrastructure and Azure Relay abuse. This post ties those together and adds a third technique that removes attacker infrastructure from the equation entirely.

Technique 1: Domain Fronting and CDN Abuse

Domain fronting hides your C2 server behind a CDN's routing infrastructure. The implant makes an HTTPS connection to a high-reputation CDN domain, something like d1a2b3c4.cloudfront.net or an azureedge.net subdomain. At the TLS layer, the Server Name Indication (SNI) shows the legitimate CDN domain. Inside the encrypted HTTP request, however, the Host header points to the attacker's backend. The CDN reads that inner header and routes the traffic to the attacker's origin.

To a defender reviewing network logs, this looks like a standard HTTPS connection to a CDN. The destination IP belongs to Amazon, Microsoft, or Fastly. The TLS certificate is valid and issued by the CDN provider. The only anomaly is a mismatch between the SNI value and the Host header, and you need TLS inspection to see that.

CISA's red team used this during their 2024 assessment of a US critical infrastructure organization (AA24-326A). They used CDN-based redirectors and domain fronting to diversify C2 communications and make outbound traffic look like it was headed to legitimate domains. In my C2 architecture post, I covered how this fits into a layered design: CDN endpoints feed into a centralized redirector, which tunnels traffic to the actual C2 server through a reverse port forward.

Researchers at Georgia Tech tested 30 CDN providers and found that 22 still allowed the SNI/Host header mismatch that makes domain fronting work. CloudFront and Cloudflare had mitigated it. Fastly and Akamai had not at the time of the study. CDN selection isn't just about reputation and availability. It's also about what each provider's TLS implementation actually permits.

The C2 server still exists somewhere, and the CDN origin configuration points to it. SNI/Host header mismatches are a known hunting angle, though they require TLS inspection at scale. Anomalous CDN traffic patterns, like a workstation that starts making heavy requests to a CloudFront distribution it has no business talking to, can also surface this.
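A minimal sketch of the SNI/Host mismatch hunt, assuming you already have decrypted request logs from a TLS-inspecting proxy. The `HttpsRecord` schema and its field names are hypothetical; map them to whatever your proxy actually exports. A mismatch is a lead, not a verdict, since connection reuse and shared certificates can produce benign differences.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HttpsRecord:
    """One decrypted HTTPS request (hypothetical log schema)."""
    src_host: str      # internal host that made the request
    sni: str           # server name seen in the TLS ClientHello
    host_header: str   # Host header seen after decryption


def normalize(name: str) -> str:
    """Lowercase the name and drop a numeric :port suffix and trailing dot."""
    name = name.strip().lower()
    host, sep, port = name.rpartition(":")
    if sep and host and port.isdigit():
        name = host
    return name.rstrip(".")


def find_fronting_candidates(records):
    """Return records whose SNI and Host header name different hosts."""
    return [
        r for r in records
        if normalize(r.sni) != normalize(r.host_header)
    ]


if __name__ == "__main__":
    sample = [
        HttpsRecord("ws-17", "d1a2b3c4.cloudfront.net", "d1a2b3c4.cloudfront.net:443"),
        HttpsRecord("ws-22", "d1a2b3c4.cloudfront.net", "backend.example.net"),
    ]
    for hit in find_fronting_candidates(sample):
        print(hit.src_host, hit.sni, "->", hit.host_header)
```

In practice you would likely suppress known-benign pairs before alerting, then pivot on which internal hosts keep producing the remaining mismatches.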

The attacker's infrastructure exists. It's just hidden behind routing. If the right thread is pulled, it can be found.

Technique 2: Cloud Service Tunneling

Cloud service tunneling pushes arbitrary TCP traffic through legitimate cloud service infrastructure, hiding the C2 server behind a cloud provider's own service mesh. Instead of using a CDN as a routing layer, this technique abuses cloud services designed to relay or broker connections. A current example is Azure Relay Bridge, which I broke down in my previous post.

Azure Relay Bridge establishes outbound TLS connections to *.servicebus.windows.net endpoints, then dynamically routes traffic through *.cloudapp.azure.com domains. It tunnels arbitrary TCP traffic (like SSH, RDP, custom implants) through Microsoft's own infrastructure. These connections are indistinguishable from legitimate Azure service traffic because they technically are legitimate Azure service traffic.

The attacker's C2 server still exists on the other end of the tunnel, but the traffic path runs entirely through Microsoft's infrastructure. There's no CDN header mismatch to hunt for. The TLS certificates belong to Microsoft. The destination IPs are Microsoft Azure addresses. Service Bus connections happen thousands of times a day in any enterprise environment running Azure.

Defenders have less to grab onto than with domain fronting. There's no header mismatch and no suspicious CDN origin to trace. You'd need TLS inspection and behavioral analysis of Service Bus usage: which hosts are establishing Service Bus connections, whether those connections are expected, and whether the traffic volume and timing match what those systems normally do. The dynamic *.cloudapp.azure.com subdomains make IOC-based blocking a losing game, since each connection can spin up a new one.

The attacker's infrastructure still exists, but it's wrapped inside a cloud service's own relay mechanism. No header trick to catch. You would need to know what normal Service Bus usage looks like in your environment and spot what doesn't fit.
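One way to start on "spot what doesn't fit" is simply listing which hosts talk to Service Bus endpoints at all and comparing that to the hosts you expect. The sketch below assumes DNS or proxy logs reduced to `(src_host, destination_fqdn)` tuples and an `expected_hosts` set you maintain yourself; both are illustrative assumptions, not a feature of any particular product.

```python
from fnmatch import fnmatchcase

# Endpoint pattern taken from the text above; extend as needed.
RELAY_PATTERNS = ("*.servicebus.windows.net",)


def unexpected_relay_hosts(connections, expected_hosts):
    """Map each unexpected source host to the relay endpoints it contacted.

    connections: iterable of (src_host, destination_fqdn) tuples.
    expected_hosts: set of hosts known to use Service Bus legitimately.
    """
    flagged = {}
    for src_host, dest in connections:
        dest = dest.lower().rstrip(".")
        is_relay = any(fnmatchcase(dest, p) for p in RELAY_PATTERNS)
        if is_relay and src_host not in expected_hosts:
            flagged.setdefault(src_host, set()).add(dest)
    return flagged


if __name__ == "__main__":
    logs = [
        ("app-01", "contoso-ns.servicebus.windows.net"),
        ("ws-finance-07", "relay-x.servicebus.windows.net"),
        ("ws-finance-07", "api.github.com"),
    ]
    for host, endpoints in unexpected_relay_hosts(logs, {"app-01"}).items():
        print(host, sorted(endpoints))
```

This only answers the "which hosts" question. Volume and timing checks would layer on top of it.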

Technique 3: Dead Drops

Dead drops remove attacker owned infrastructure from the C2 communication path entirely. Both the attacker and the implant independently read and write to a cloud service the target network already trusts. There's no direct connection between them. The cloud service is just a shared mailbox.

The attacker writes a command to a GitHub Issue, a Google Sheet cell, an S3 object, or a cloud hosted document. The implant polls that service on a schedule, reads the command, executes it, and writes the output back. The traffic from the compromised host goes to api.github.com or sheets.googleapis.com, the same endpoints that legitimate developer tools and business applications hit every day, and it uses valid TLS certificates, legitimate SDKs, and real user agent strings.

APT29 ran C2 through Dropbox and Google Drive. APT41 used Google Sheets and Google Calendar to issue commands. CloudSorcerer embedded encoded C2 instructions in a GitHub profile page. When Kaspersky burned them on it, they pivoted to LiveJournal and Quora profiles in a subsequent campaign. Scattered Spider exfiltrated data to GitHub repos and S3 buckets using S3 Browser, a legitimate AWS client.

From an offensive standpoint, there's no server to find. The attacker accesses the same cloud service from their own machine, through a VPN or Tor, writes a command, and walks away. The implant picks it up on the next poll. If the cloud account gets burned, spin up a new one and update the config. The whole C2 channel is disposable and the only thing it's attributable to is the cloud provider.

Traditional network analysis gives defenders almost nothing to find. No malicious domain, no suspicious destination, no infrastructure to trace back to. Detection is purely behavioral:

- Which hosts are making API calls to services they have no business using. A finance workstation polling GitHub Issues is a different story than a CI runner.

- Whether S3 access patterns from a given host match expected workflows, or whether you're seeing GetObject/PutObject activity against prefixes no known job touches.

- Whether API call timing looks like a person or a script beaconing every 90 seconds.

- Whether new OAuth tokens or app consents are appearing against cloud services without a corresponding change request.
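The timing question in the list above can be approximated by measuring how regular the gaps between API calls are. This is a rough sketch, assuming you can extract per-host call timestamps (in seconds) for a given service; the `min_calls` and `max_cv` thresholds are placeholder values, and an implant that adds jitter to its polling will score higher than a fixed-interval one.

```python
from statistics import mean, pstdev


def interval_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive calls.

    Returns None when there are too few calls to say anything.
    """
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2:
        return None
    avg = mean(gaps)
    if avg == 0:
        return None
    return pstdev(gaps) / avg


def looks_like_beacon(timestamps, min_calls=10, max_cv=0.1):
    """Flag call series whose spacing is suspiciously regular."""
    if len(timestamps) < min_calls:
        return False
    cv = interval_cv(timestamps)
    return cv is not None and cv <= max_cv


if __name__ == "__main__":
    scripted = [i * 90.0 for i in range(20)]  # one call every 90 seconds
    human = [0, 5, 7, 300, 310, 2000, 2003, 2500, 9000, 9001, 9500, 20000]
    print(looks_like_beacon(scripted), looks_like_beacon(human))
```

Low variation alone won't separate an implant from a legitimate cron job, so this works best combined with the "does this host have any business calling this API" check.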

There's no attacker infrastructure in the loop. You can't find what doesn't exist. The only path is knowing what normal cloud API usage looks like per host and catching what deviates.

The Spectrum

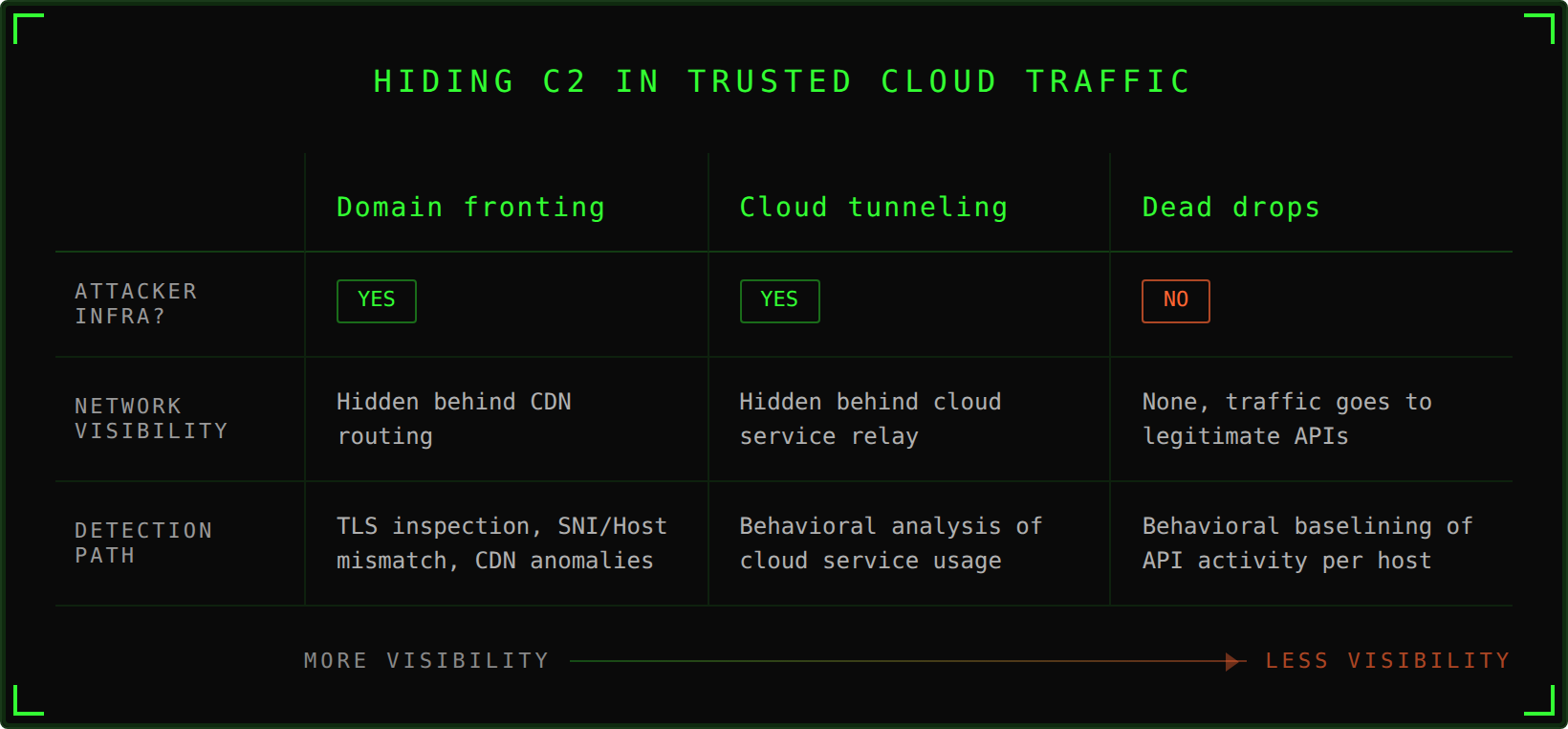

Each technique strips away one more thing for defenders to work with:

| Technique | Attacker Infra Exists? | Visible to Network Analysis? | Primary Detection Path |
|---|---|---|---|
| Domain Fronting | Yes | Hidden behind CDN routing | TLS inspection, SNI/Host mismatch, CDN traffic anomalies |
| Cloud Tunneling | Yes | Hidden behind cloud service relay | Behavioral analysis of cloud service usage patterns |
| Dead Drops | No | No, traffic goes to legitimate APIs | Behavioral baselining of cloud API activity per host |

Domain fronting hides the server, cloud tunneling hides the traffic, and dead drops remove the server entirely.

So What Actually Helps?

The answer is the same across all three: you need to know what normal looks like for your environment. Not your network in aggregate. The granular, per system, per role, per service account kind of baseline.

A lot of orgs don't have that baseline. A lot of them don't even know which systems are talking to these APIs. The baseline answers the question of whether the behavior makes sense for the system generating it.
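To make "does the behavior make sense for the system generating it" concrete, here is a toy version of a per-role baseline. It assumes you can label each host with a role and reduce logs to `(role, service)` pairs from a period you trust; the role names and hostnames are made up for illustration, and a real baseline would also need to age out stale entries as the environment changes.

```python
from collections import defaultdict


def build_baseline(history):
    """Collect the set of cloud services each role was seen using.

    history: iterable of (role, service) pairs from a trusted period.
    """
    baseline = defaultdict(set)
    for role, service in history:
        baseline[role].add(service)
    return dict(baseline)


def deviations(baseline, observed):
    """Return (host, role, service) events where the service is new for the role."""
    return [
        (host, role, service)
        for host, role, service in observed
        if service not in baseline.get(role, set())
    ]


if __name__ == "__main__":
    baseline = build_baseline([
        ("ci-runner", "api.github.com"),
        ("finance", "sheets.googleapis.com"),
    ])
    today = [
        ("ci-03", "ci-runner", "api.github.com"),
        ("fin-12", "finance", "api.github.com"),
    ]
    for event in deviations(baseline, today):
        print(event)
```

Here the finance workstation hitting api.github.com is the only event surfaced, while the CI runner making the same call is treated as normal.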

Getting and maintaining that baseline is a whole production in itself. It's not something you set up once and forget about. Environments change, new services get adopted, teams spin up new workflows, and what was normal five months ago might not be normal today. More to come on that.

References

- CISA Advisory AA24-326A: Enhancing Cyber Resilience: Insights from CISA Red Team Assessment of a U.S. Critical Infrastructure Sector Organization. https://www.cisa.gov/news-events/cybersecurity-advisories/aa24-326a

- Subramani, K., Perdisci, R., & Skafidas, P. (2023). Measuring CDNs Susceptible to Domain Fronting. arXiv:2310.17851. https://arxiv.org/abs/2310.17851

- Kaspersky GReAT. CloudSorcerer Analysis. https://securelist.com/cloudsorcerer-new-apt-cloud-actor/113056/

- Hacker Hermanos. When Azure Relay Becomes a Red Teamer's Highway. https://hackerhermanos.com/posts/azure-relay-red-teamer/

- Hacker Hermanos. Layered Red Team C2 Infrastructure: Architecture and Trust Boundaries. https://hackerhermanos.com/posts/red-team-c2-infrastructure/